fix: lagging issue in ask AI during message streaming #16201

FelixMalfait merged 7 commits into main


Greptile Overview

Greptile Summary

Fixed lag during AI message streaming by adding a 100 ms throttle to message updates and removing the smooth scroll animation.

The throttle reduces the frequency of React re-renders during streaming, while the instant scroll prevents the animation queuing that caused visual lag. These optimizations work together.

Confidence Score: 5/5
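To make the throttling idea concrete, here is a minimal sketch of coalescing streamed updates so a listener fires at most once per interval. It is not the AI SDK's implementation of `experimental_throttle`; `createThrottledUpdater`, its `push`/`flush` methods, and the injectable `now` clock are hypothetical names introduced only for illustration.

```typescript
// Hypothetical helper: illustrates throttling, not the AI SDK's internals.
type Listener<T> = (value: T) => void;

function createThrottledUpdater<T>(
  onUpdate: Listener<T>,
  waitMs: number,
  now: () => number = Date.now, // injectable clock (assumption, eases testing)
) {
  let lastEmit = Number.NEGATIVE_INFINITY;
  let pending: { value: T } | null = null;

  return {
    // Called for every streamed chunk; emits only if waitMs has elapsed.
    push(value: T): void {
      if (now() - lastEmit >= waitMs) {
        lastEmit = now();
        pending = null;
        onUpdate(value);
      } else {
        // Too soon: remember only the latest value and skip the update.
        pending = { value };
      }
    },
    // Called when the stream completes so the final state is never lost.
    flush(): void {
      if (pending !== null) {
        const { value } = pending;
        pending = null;
        lastEmit = now();
        onUpdate(value);
      }
    },
  };
}
```

With `waitMs` set to 100, chunks arriving every few milliseconds would trigger roughly ten listener calls per second instead of one per chunk, which is the effect the summary attributes to the throttle.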


Sequence Diagram

    sequenceDiagram
        participant User
        participant AgentChat
        participant useChat
        participant Stream
        participant ScrollEffect
        participant DOM
        User->>AgentChat: Send message
        AgentChat->>useChat: sendMessage()
        useChat->>Stream: Start streaming response
        loop Every 100ms (throttled)
            Stream->>useChat: Chunk received
            useChat->>AgentChat: Update messages state
            AgentChat->>ScrollEffect: Messages changed
            ScrollEffect->>DOM: scrollTo({top, behavior: instant})
        end
        Stream->>useChat: Stream complete
        useChat->>AgentChat: Final message state
        AgentChat->>User: Display complete response
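The scroll step in the diagram can be sketched as below. This is an illustration under assumptions, not the body of `useAgentChatScrollToBottom`: the `ScrollableLike` interface and the `scrollToBottomInstantly` function are hypothetical names, and the structural type exists only so the snippet can run without a browser DOM.

```typescript
// Minimal structural type (assumption) so the sketch runs outside a browser.
interface ScrollableLike {
  scrollHeight: number;
  scrollTo(options: { top: number; behavior: 'auto' | 'instant' | 'smooth' }): void;
}

// Jump straight to the bottom. With 'smooth', each throttled update would
// start a new animation while the previous one may still be running.
function scrollToBottomInstantly(container: ScrollableLike): void {
  container.scrollTo({ top: container.scrollHeight, behavior: 'instant' });
}
```

In a React effect this would typically run whenever the messages array changes, which after this PR happens at most once per throttle interval during streaming.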


…ed AIChatTab and SendMessageButton components to utilize agentChatInputState for input value and change handling, improving state consistency across components.

…context structure for improved clarity and maintainability.

…amlining package management.


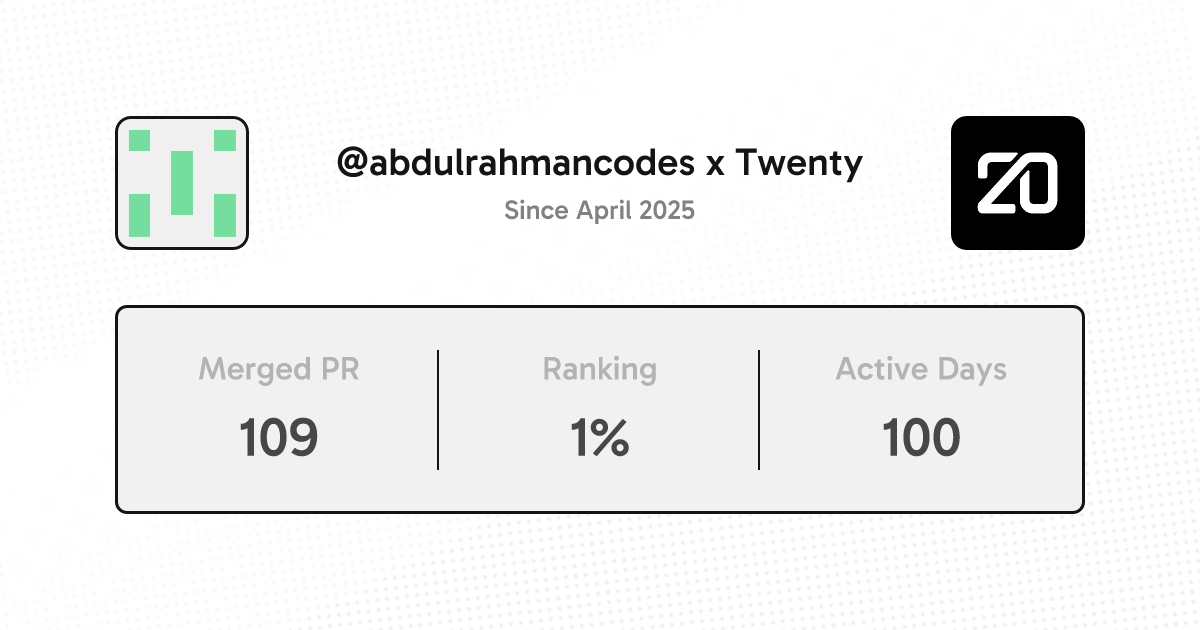

Thanks @abdulrahmancodes for your contribution!

Changes

1. Fixed streaming lag with throttle parameter

**Problem:** The AI chat lagged during message streaming because the messages array was updated too frequently, causing all messages to re-render too often.

**Solution:** Added the `experimental_throttle: 100` parameter to the `useChat` hook configuration. This throttles message updates during streaming, which prevents excessive re-renders and improves performance.

2. Cleaned up `useAgentChat` hook return values

**Context:** The `useAgentChat` hook primarily returns values from the underlying `useChat` hook, so there wasn't significant room for improvement regarding the "umbrella hook" pattern. However, it returned some values that weren't needed.

**Solution:**

- Removed `input` and `handleInputChange` from the `useAgentChat` hook return. They weren't needed because input state is already managed directly via Recoil state (`agentChatInputState`) in components.

<!-- CURSOR_SUMMARY -->

---

> [!NOTE]
> Throttle message streaming updates and switch AI chat input management from context to Recoil; minor scroll behavior tweak.
>
> - **AI Chat performance**:
>   - Add `experimental_throttle: 100` to `useChat` in `useAgentChat` to reduce re-render frequency during streaming.
> - **State management**:
>   - Migrate input handling to Recoil via `agentChatInputState`; remove `input` and `handleInputChange` from `AgentChatContext` and `useAgentChat` returns.
>   - Update `AIChatTab` and `SendMessageButton` to read/write input from Recoil and adjust hotkeys/disabled state accordingly.
> - **UX behavior**:
>   - Remove smooth scroll behavior in `useAgentChatScrollToBottom`.
>
> <sup>Written by [Cursor Bugbot](https://cursor.com/dashboard?tab=bugbot) for commit 911b341. This will update automatically on new commits. Configure [here](https://cursor.com/dashboard?tab=bugbot).</sup>

<!-- /CURSOR_SUMMARY -->

---------

Co-authored-by: Félix Malfait <felix.malfait@gmail.com>
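The state-management change can be pictured with a small shared store that components read and write directly, instead of receiving `input` and `handleInputChange` from the chat hook. The sketch below is a plain TypeScript stand-in and deliberately not Recoil's API: `createSharedState`, `agentChatInputSketch`, and `isSendDisabled` are hypothetical names, and the rule that an empty input disables sending is an assumption.

```typescript
// Hypothetical stand-in for a Recoil atom; this is not Recoil's API.
type Subscriber<T> = (value: T) => void;

function createSharedState<T>(initial: T) {
  let current = initial;
  const subscribers = new Set<Subscriber<T>>();
  return {
    get: (): T => current,
    set(next: T): void {
      current = next;
      // Notify every subscribed reader of the new value.
      subscribers.forEach((notify) => notify(next));
    },
    // Returns an unsubscribe function.
    subscribe(subscriber: Subscriber<T>): () => void {
      subscribers.add(subscriber);
      return () => {
        subscribers.delete(subscriber);
      };
    },
  };
}

// Illustrative name echoing the PR's atom; the real one is a Recoil atom.
const agentChatInputSketch = createSharedState('');

// Assumed rule: a send button needs only the input value, not the chat hook.
function isSendDisabled(input: string): boolean {
  return input.trim().length === 0;
}
```

Because the input lives outside the chat hook, typing in the text area does not have to pass through the hook's return value, and the hook's consumers no longer depend on input changes.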
